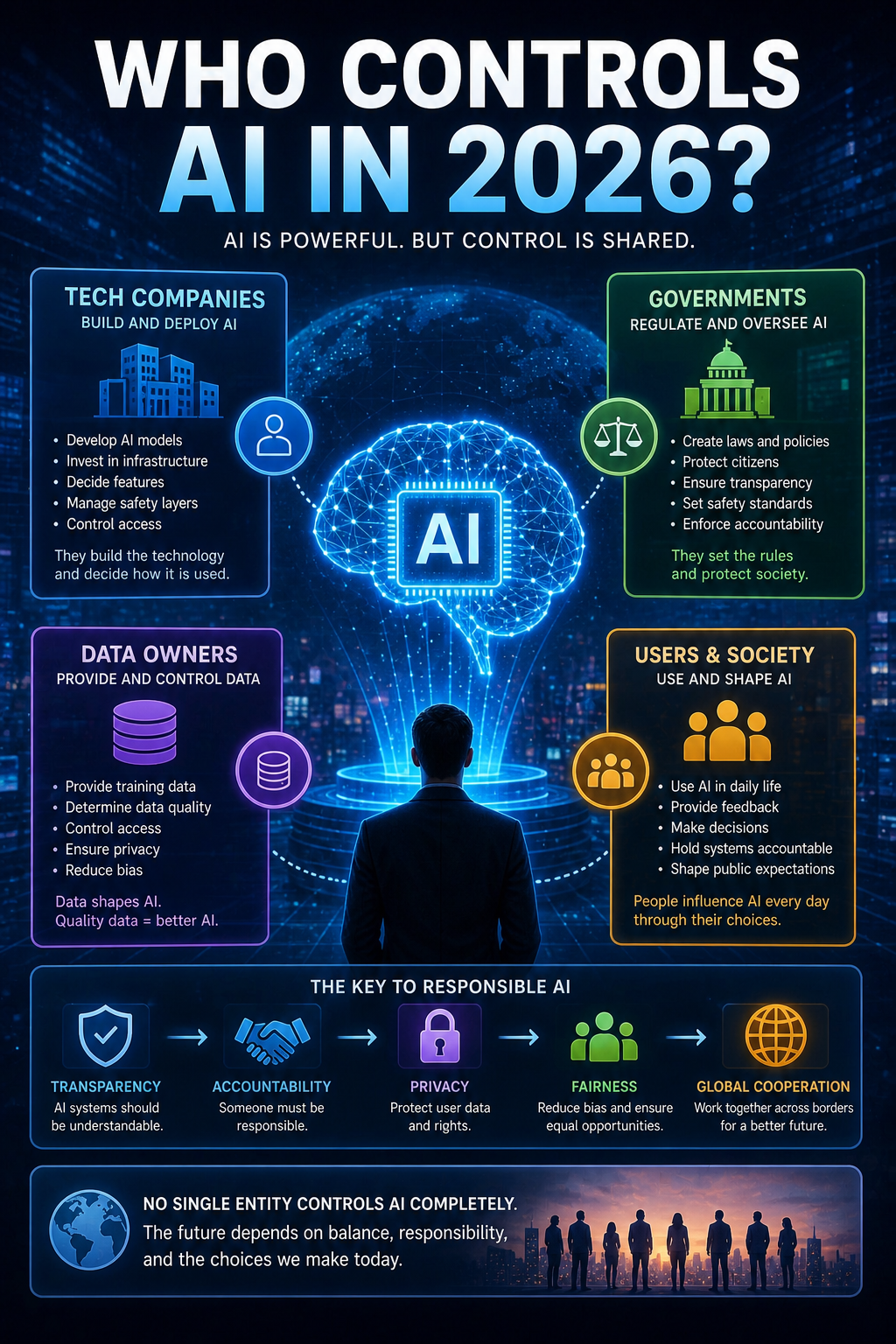

Who Controls AI in 2026?

Introduction

Artificial Intelligence is now part of everyday life. It helps us search for information, recommends content, automates work, analyzes data, and supports decision-making in businesses, governments, and public services.

As AI becomes more powerful and more present, one important question naturally follows:

👉 Who actually controls AI?

Is it the companies that build it? The governments that regulate it? Or the people who use it every day?

In 2026, the answer is more complex than it may first appear.

The Companies Building AI

Today, many of the most advanced AI systems are developed by major technology companies.

These organizations invest enormous resources in:

Research and development

Computing infrastructure

Large-scale data processing

AI model training

Security and deployment

Because of this, private companies currently have a major influence over how advanced AI systems are created and released.

They often decide:

What the models can do

How they are deployed

What safety rules are included

Which users or industries can access them

In many ways, companies are currently the main builders of modern AI.

The Role of Governments and Regulation

As AI becomes more influential, governments are becoming more involved.

In 2026, many countries are working on regulations that address:

Privacy and data protection

Transparency

Safety standards

Responsible deployment

Accountability for harmful outcomes

The goal is not to stop innovation.

The goal is to ensure AI develops in ways that protect society.

Governments do not build most AI systems directly, but they increasingly define the rules under which AI operates.

The People Who Use AI

Control also exists at the user level.

Every day, millions of people influence AI through:

The way they use it

The feedback they provide

The tasks they automate

The decisions they accept or reject

AI does not operate in isolation.

Humans decide:

Whether to trust its output

When to verify results

How much responsibility to give automated systems

This means users also play an important role in shaping AI’s real-world impact.
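To make "when to verify results" a little more concrete, here is a minimal sketch of one possible pattern: outputs the system is less sure about are sent to a person instead of being accepted automatically. The names `Prediction` and `route`, and the 0.9 threshold, are hypothetical choices made for illustration and do not come from any particular product.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    label: str         # what the AI system suggests
    confidence: float  # the system's own score, between 0.0 and 1.0


def route(prediction: Prediction, threshold: float = 0.9) -> str:
    """Decide whether to accept an output automatically or ask a human.

    The threshold is an assumed policy setting chosen by people,
    not something the model decides for itself.
    """
    if prediction.confidence >= threshold:
        return "auto_accept"
    return "human_review"


if __name__ == "__main__":
    print(route(Prediction("approve", 0.97)))
    print(route(Prediction("approve", 0.62)))
```

Raising the threshold sends more decisions to people, while lowering it hands more responsibility to the automated system. Either way, the setting is a human choice.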

Data: One of the Biggest Sources of Control

AI learns from data.

That means whoever controls data often has significant influence over AI.

Important questions include:

What data is used to train models?

Is the data accurate?

Is it balanced?

Who owns it?

Who is allowed to access it?

Data quality strongly affects AI behavior.

Poor or biased data can lead to poor or biased results.

In many ways, data governance is one of the most important forms of AI control.
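As a rough illustration of the "Is it balanced?" question, the sketch below counts how often each group or label appears in a dataset and flags the ones that fall under a chosen share. The function names, the toy data, and the `min_share` cutoff are all assumptions for this example. Real data audits look at far more than simple counts.

```python
from collections import Counter


def label_shares(labels):
    """Return each label's share of the dataset as a fraction of the total."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


def underrepresented(labels, min_share=0.2):
    """List labels whose share falls below an assumed minimum share."""
    shares = label_shares(labels)
    return sorted(label for label, share in shares.items() if share < min_share)


if __name__ == "__main__":
    # Hypothetical toy dataset: 8 examples from group "a", 2 from group "b".
    sample = ["a"] * 8 + ["b"] * 2
    print(label_shares(sample))
    print(underrepresented(sample, min_share=0.3))
```

A check like this does not fix bias on its own, but it shows how a question about data can be turned into something people can measure and review.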

Can AI Control Itself?

This question is often misunderstood.

AI can:

Analyze patterns

Make predictions

Generate responses

Automate tasks

But AI does not independently define goals, values, or responsibility.

It does not decide what society should want.

Humans still define:

Objectives

Boundaries

Priorities

Risk tolerance

AI can operate with increasing autonomy in specific environments — but it does not govern itself in the human sense.

The Challenge of Global Control

One major difficulty is that AI is global.

Technology moves across:

Countries

Industries

Platforms

Legal systems

Different regions may have different rules, priorities, and standards.

This creates challenges:

How do we regulate globally used systems?

How do we balance innovation with safety?

Who sets international standards?

These questions are becoming increasingly important.

What Control Should Look Like

The most effective AI control is not about one single group having all power.

It requires balance.

Companies build and improve the technology.

Governments set rules, standards, and accountability.

Users apply judgment and responsible use.

Experts and society help define ethical boundaries.

Good control is not about stopping progress.

It is about guiding it responsibly.

What This Means for the Future

As AI becomes more capable, the question of control will become even more important.

In the coming years, we will likely see:

More regulation

Stronger governance frameworks

Better transparency requirements

More public discussion about responsibility and trust

The future of AI will depend not only on what machines can do…

👉 but on how humans choose to manage that power.

Conclusion

So, who controls AI in 2026?

The honest answer is:

👉 No single person or organization controls it completely.

Control is shared between:

The companies building AI

Governments creating rules

The data shaping systems

The people using the technology

Artificial Intelligence is powerful.

But the direction it takes will ultimately depend on human decisions.

And that may be the most important part of all.