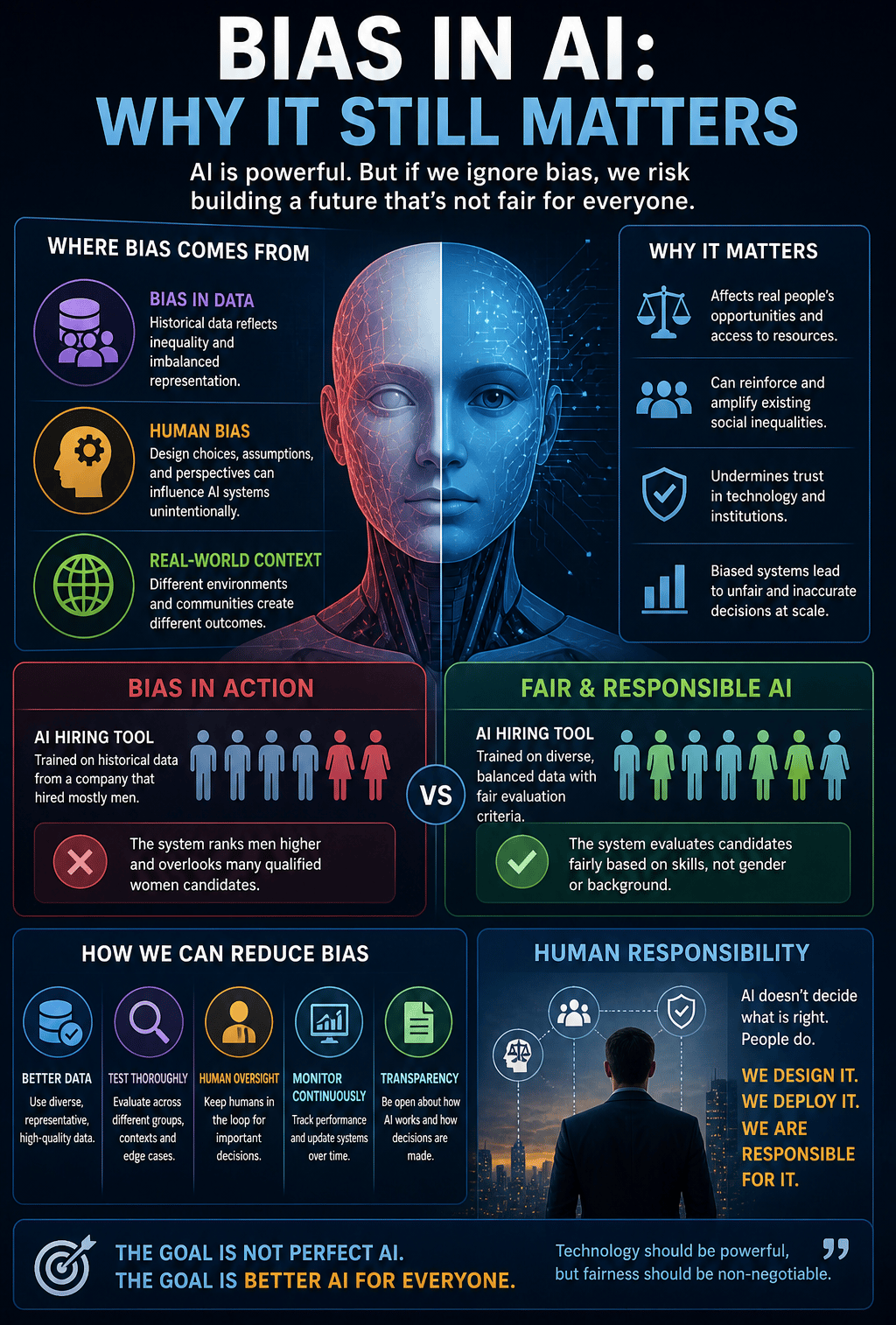

Bias in AI: Why It Still Matters

Introduction

Artificial Intelligence is often seen as objective, logical, and data-driven. Because AI relies on algorithms and large datasets, many people assume it makes neutral decisions without human influence. But that is not always true. AI systems can reflect the biases present in the data they learn from, the assumptions built into their design, and the way they are deployed in the real world. That is why bias in AI still matters, and why it remains one of the most important discussions in the future of technology.

Where Bias Can Come From

There are several common sources of AI bias.

1. Training Data

AI depends heavily on data.

If training data is incomplete or unbalanced, the model may learn distorted patterns.

For example:

Some groups may be underrepresented

Historical decisions may already contain bias

Certain situations may not appear enough in the data
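
One simple way to spot the first problem, underrepresentation, is to count how much of the dataset each group makes up. The sketch below is only an illustration: the `region` field, the toy records, and the 20% threshold are all assumptions made up for this example, not a standard.

```python
from collections import Counter


def group_shares(records, group_key):
    """Return each group's share of the records as a fraction of the total."""
    counts = Counter(record[group_key] for record in records)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def underrepresented(shares, min_share=0.2):
    """List groups whose share is below an illustrative (assumed) threshold."""
    return sorted(group for group, share in shares.items() if share < min_share)


# Toy records, invented for illustration only.
records = (
    [{"region": "north"}] * 70
    + [{"region": "south"}] * 25
    + [{"region": "east"}] * 5
)

shares = group_shares(records, "region")
flagged = underrepresented(shares, min_share=0.2)
print(flagged)  # ['east']
```

A check like this only shows who is missing from the data. It says nothing about whether the historical decisions recorded in that data were fair.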

2. Human Design Choices

People decide:

What data to use

Which goals to optimize

How the system should behave

Which metrics matter most

Even with good intentions, design decisions can unintentionally introduce bias.

3. Real-World Deployment

A model may perform well in testing but behave differently in real-world environments.

Different populations, cultures, languages, and contexts can affect outcomes.

Why It Matters

Bias in AI matters because AI increasingly influences important areas of life.

Examples include:

Hiring and recruitment

Banking and lending

Healthcare

Education

Security systems

Public services

If biased systems are used at scale, they can affect thousands or even millions of people.

The impact is then far larger than that of any single human decision.

Bias Does Not Always Look Obvious

One important point is that bias is not always easy to notice.

Sometimes AI can appear accurate overall while still performing poorly for specific groups or situations.

For example:

High average accuracy can hide uneven results

Rare cases may be ignored

Certain users may experience worse outcomes without realizing why

This makes careful evaluation essential.
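
To make this concrete, here is a minimal sketch of how a high overall accuracy can hide a weak result for a smaller group. The labels, predictions, and the group names "A" and "B" are toy values invented for this example.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Compute accuracy separately for each group label."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}


# Toy data: group A has 90 examples (88 correct),
# group B has 10 examples (5 correct).
y_true = [1] * 100
y_pred = [1] * 88 + [0] * 2 + [1] * 5 + [0] * 5
groups = ["A"] * 90 + ["B"] * 10

overall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
per_group = accuracy_by_group(y_true, y_pred, groups)

print(f"overall: {overall:.2f}")            # overall: 0.93
for group, acc in sorted(per_group.items()):
    print(f"group {group}: {acc:.2f}")      # group A: 0.98, group B: 0.50
```

In this toy case the overall figure is 93%, yet the model is right only half the time for group B. Reporting the average alone would hide that.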

Can Bias Be Eliminated Completely?

Realistically, probably not.

Why?

Because:

Data reflects imperfect human societies

Context changes over time

New environments create new challenges

The goal is not perfection.

The goal is continuous improvement, transparency, and responsible oversight.

How Bias Can Be Reduced

There are practical ways to reduce bias.

Use better and more representative data

Diverse and high-quality data helps improve fairness.

Test systems in different scenarios

AI should be evaluated across multiple groups, environments, and edge cases.
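
As a rough sketch of what this kind of testing can look like, the example below breaks evaluation results into slices and finds the slice with the highest error rate. The `language` and `device` attributes, the `make_rows` helper, and all the numbers are hypothetical and exist only for illustration.

```python
from collections import defaultdict


def error_rate_by_slice(rows, slice_keys):
    """Error rate for every combination of the given attributes."""
    errors = defaultdict(int)
    totals = defaultdict(int)
    for row in rows:
        key = tuple(row[k] for k in slice_keys)
        totals[key] += 1
        errors[key] += int(row["label"] != row["prediction"])
    return {key: errors[key] / totals[key] for key in totals}


def make_rows(language, device, n, n_wrong):
    """Build n toy rows for one slice, n_wrong of them misclassified."""
    return [
        {
            "language": language,
            "device": device,
            "label": 1,
            "prediction": 0 if i < n_wrong else 1,
        }
        for i in range(n)
    ]


# Toy evaluation set, invented for illustration only.
rows = (
    make_rows("en", "desktop", 50, 2)
    + make_rows("en", "mobile", 30, 3)
    + make_rows("es", "desktop", 15, 3)
    + make_rows("es", "mobile", 5, 3)
)

rates = error_rate_by_slice(rows, ["language", "device"])
worst_slice = max(rates, key=rates.get)
print(worst_slice, rates[worst_slice])  # ('es', 'mobile') 0.6
```

Small slices like the last one give noisy estimates, so a result like this is better treated as a prompt to collect more test cases than as a final verdict.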

Keep humans involved

Human review remains essential for important decisions.

Monitor systems after deployment

Bias can appear over time, so AI needs ongoing monitoring and adjustment.
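
One possible shape for such monitoring is sketched below: for each reporting period, compare accuracy between groups and raise a flag when the gap grows too large. The period names, the group names, the 0.15 threshold, and the `outcomes` helper are all assumptions chosen for this example.

```python
def accuracy(pairs):
    """Fraction of (label, prediction) pairs that match."""
    return sum(truth == pred for truth, pred in pairs) / len(pairs)


def monitor_gap(batches, max_gap=0.15):
    """Return (period, gap, alert) for each batch of per-group outcomes."""
    report = []
    for period, by_group in batches:
        scores = {group: accuracy(pairs) for group, pairs in by_group.items()}
        gap = max(scores.values()) - min(scores.values())
        report.append((period, round(gap, 3), gap > max_gap))
    return report


def outcomes(n_correct, n_wrong):
    """Build toy (label, prediction) pairs; hypothetical helper."""
    return [(1, 1)] * n_correct + [(1, 0)] * n_wrong


# Toy monitoring data: group B slowly gets worse after deployment.
batches = [
    ("month 1", {"A": outcomes(19, 1), "B": outcomes(18, 2)}),
    ("month 2", {"A": outcomes(19, 1), "B": outcomes(17, 3)}),
    ("month 3", {"A": outcomes(19, 1), "B": outcomes(14, 6)}),
]

report = monitor_gap(batches, max_gap=0.15)
for period, gap, alert in report:
    print(period, gap, "ALERT" if alert else "ok")
```

Here the gap widens from month to month and only the third month crosses the threshold. Where to set that threshold, and what happens after an alert, remain human decisions.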

Build transparent processes

People should understand how important AI decisions are made.

Why Human Responsibility Still Matters

A common misunderstanding is that once an AI system is deployed, responsibility shifts to the machine.

That is not true.

Humans remain responsible for:

The design

The training data

The deployment choices

The monitoring process

The impact on users

AI does not carry ethical responsibility.

People do.

What This Means for the Future

As AI becomes more integrated into society, bias will remain an important issue.

The future will require:

Better governance

Stronger testing standards

More transparency

More public discussion

More responsible development practices

Trust in AI will depend not only on what systems can do…

👉 but also on whether people believe they are fair.

Conclusion

Bias in AI still matters because AI increasingly shapes real decisions in the real world.

Even advanced systems can inherit imperfect data, human assumptions, and hidden imbalances.

The solution is not to reject AI.

It is to build it more carefully.

Because the future of Artificial Intelligence should not only be about speed, power, and automation…

👉 It should also be about fairness, responsibility, and trust.